Marketing to developers requires a different playbook. Traditional metrics like impressions or click-through rates don’t reveal whether your campaigns are connecting with this technical audience. Instead, you need to focus on product usage signals like API key creations, CLI installs, and GitHub engagement. These actions align with how developers evaluate and adopt tools.

To measure success effectively, you need a custom analytics stack. Why? Developers often bypass typical buyer journeys, starting with documentation or code examples, not landing pages. Plus, many use ad blockers, making them invisible to standard analytics tools.

Here’s how you can build a measurement stack that works:

- Focus on technical behaviors like API logs, CLI events, and documentation usage.

- Shift from Marketing Qualified Leads (MQLs) to Product Qualified Leads (PQLs), which convert 3–5x better.

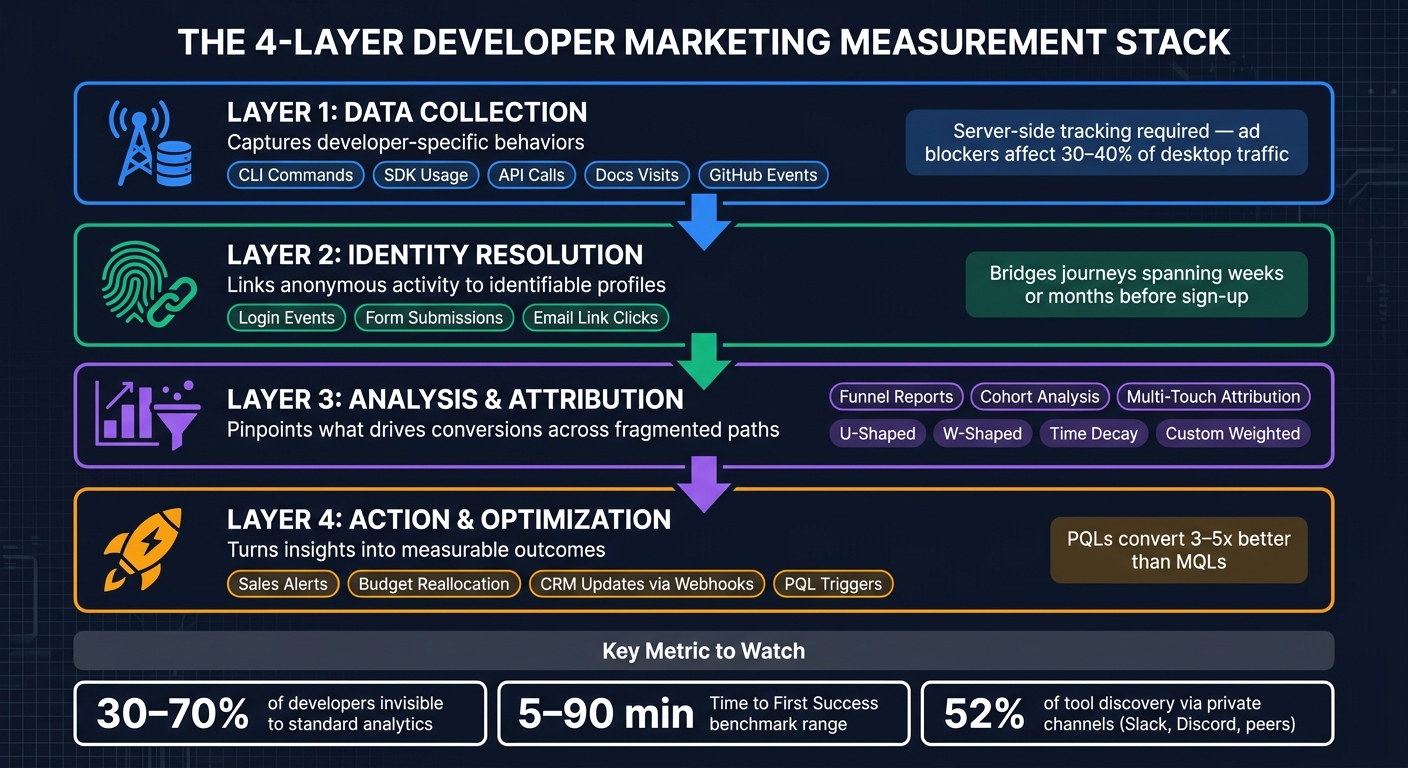

- Use a four-layer stack: data collection, identity resolution, analysis, and action.

- Set metrics based on the developer journey: awareness (GitHub views), evaluation (API key creation), adoption (steady API usage), and advocacy (peer recommendations).

- Track Time to First Success (TTFS) to identify onboarding friction.

This approach ensures you’re not just tracking vanity metrics but driving real engagement and adoption.

Setting Advertising Objectives and Metrics for Developer Campaigns

Define clear goals and metrics to make data-driven decisions that resonate with developers.

Matching Goals to Metrics

Developer campaigns often follow a four-stage journey: awareness, evaluation, adoption, and advocacy. Each stage requires specific metrics to track progress effectively.

At the awareness stage, focus on visibility where developers typically search for solutions. Metrics like GitHub repository views, clicks from problem-focused searches, and Stack Overflow mentions can provide insight. As developers move into the evaluation stage, look for activation signals such as API key creations, Quickstart completion rates, or SDK installations. In the adoption stage, consistent usage patterns - like your tool appearing in dependency lockfiles, CI/CD pipelines, or steady API call volumes - indicate success. Finally, advocacy is measured by developers recommending your tool to others.

"Impressions are the worst vanity metric you can think of. Someone scrolling past your social media post without properly noticing it still counts as an impression." - Milica Maksimović, Co-founder and COO, Literally.dev

Instead of relying on vanity metrics like impressions, track actions that reveal a developer's next move. For example, did they click from your blog to your documentation? Did they copy a code sample? These micro-actions provide deeper insights into engagement than raw numbers ever could.

The next step is to establish clear KPIs that tie these metrics to measurable outcomes.

Key Performance Indicators for Developer Campaigns

When setting KPIs, focus on two main categories: leading metrics and lagging metrics.

- Lagging metrics, like qualified signups or revenue, show long-term results.

- Leading metrics, such as engagement with documentation, copy_code_sample events, and visits to high-intent pages (e.g., pricing or terms of service), highlight areas for immediate improvement.

One particularly useful KPI is Time to First Success (TTFS) - the time it takes a developer to go from setup initiation to achieving a working result. Benchmarks for TTFS vary by tool type:

- Auth/API libraries: 5–15 minutes

- Frameworks/SDKs: 15–45 minutes

- Infrastructure tools: 45–90 minutes

If your TTFS falls outside these ranges, it could indicate onboarding issues. Track this metric from the setup_started event to the setup_success event.
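As a rough sketch of how this could be computed, the Python below pairs each developer's first setup_started event with their first later setup_success event and reports the gap in minutes. The event tuple shape and the function names are assumptions for illustration; only the two event names come from the text above.

```python
from datetime import datetime
from statistics import median
from typing import Optional

# Hypothetical event shape: (developer_id, event_name, ISO-8601 timestamp).
Event = tuple[str, str, str]


def ttfs_minutes(events: list[Event]) -> dict[str, float]:
    """Minutes from first setup_started to first later setup_success, per developer."""
    started: dict[str, datetime] = {}
    finished: dict[str, datetime] = {}
    # Process events in chronological order so "first" really means earliest.
    for dev_id, name, ts in sorted(events, key=lambda e: datetime.fromisoformat(e[2])):
        when = datetime.fromisoformat(ts)
        if name == "setup_started":
            started.setdefault(dev_id, when)   # keep the earliest start
        elif name == "setup_success" and dev_id in started:
            finished.setdefault(dev_id, when)  # keep the first success after a start
    return {
        dev: (finished[dev] - started[dev]).total_seconds() / 60
        for dev in finished
    }


def median_ttfs(events: list[Event]) -> Optional[float]:
    """Median TTFS in minutes, or None if no developer has succeeded yet."""
    values = list(ttfs_minutes(events).values())
    return median(values) if values else None
```

The median is used here rather than the mean because a few developers who abandon setup and return days later would otherwise dominate the figure. Developers who start but never succeed are excluded from the result, so it is worth tracking their count separately.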

It’s also worth noting that 30% to 70% of developers may remain invisible to traditional analytics due to ad blockers or cookie opt-outs. To fill these gaps, use self-reported attribution methods to capture interactions from private channels.

With your KPIs in place, the next step is building a measurement plan that ties these metrics to your business goals.

How to Build a Measurement Plan

A well-structured measurement plan ensures you’re collecting the right data to support your business objectives, avoiding the trap of tracking metrics just because they’re available.

"You treat it as a product and measure ROI at a program level. You optimize activities within the programs but don't report ROI on them." - Jakub Czakon, CMO and Dev Marketing Advisor

The key is to anchor each metric to a specific business goal and developer behavior. Here’s an example:

| Business Goal | Developer Behavior | Metric | Data Source |
|---|---|---|---|
| Increase trial adoption | Testing the tool locally | API key creation / SDK init | Developer dashboard / server logs |
| Improve onboarding | Completing the "Hello World" | Time to First Success | Docs events / CLI telemetry |
| Drive awareness | Researching technical solutions | Clicks from problem-oriented searches | Google Search Console |
| Build community | Recommending to peers | Self-reported word-of-mouth | Signup form (free-text field) |

Coordination with sales and product teams is crucial when defining what counts as a qualified signup. For example, if marketing optimizes for raw signups while sales focuses on accounts that match your Ideal Customer Profile (ICP) and have completed a key product action, misalignment can occur. Agreeing on these definitions early ensures that your measurement plan stays aligned with business priorities, setting the stage for the custom measurement stack discussed in the next section.


Building a Developer-Specific Measurement Stack

Figure: Developer Marketing Measurement Stack: 4 Layers to Track What Matters

To effectively track and understand developer behavior, you need a measurement stack tailored to their unique interactions. This setup captures critical data points and bridges the gap between anonymous and identifiable actions, addressing the challenges of non-linear user journeys and limited visibility.

Core Layers of the Stack

A strong developer measurement stack is built on four interconnected layers:

- Data Collection: Focuses on capturing developer-specific behaviors like CLI commands, SDK usage, API calls, and documentation visits - signals that traditional analytics often miss.

- Identity Resolution: Links anonymous activity to identifiable profiles when users log in, fill out forms, or interact with email links. This is crucial for tracking journeys that can span weeks or months before a sign-up.

- Analysis & Attribution: Provides tools like funnel reports, cohort analysis, and multi-touch attribution to pinpoint what drives conversions across fragmented user paths.

- Action & Optimization: Turns insights into actionable steps, such as triggering sales alerts, reallocating budgets, or updating CRMs via webhooks tied to product milestones.

Since ad blockers affect 30–40% of desktop traffic, server-side tracking becomes essential for measuring technically savvy developer audiences. Relying solely on client-side scripts risks missing a significant portion of your audience.

These layers also enable seamless integration of campaign data, made possible through a standardized UTM schema.

Integrating daily.dev Ads Data Into the Stack

To incorporate daily.dev Ads data, enforce a consistent UTM schema during link creation. Define parameters like utm_source, utm_medium, utm_campaign, utm_content, and utm_term. Use an API-driven link generator to avoid errors and inconsistencies that manual entry can introduce.
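One way such a generator might enforce the schema is sketched below using only Python's standard library. The function name, the choice of which parameters are required, and the normalisation rules (lowercase, spaces to underscores) are assumptions for illustration, not part of any daily.dev API.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed policy: these three must always be present; the other two are optional.
REQUIRED = ("utm_source", "utm_medium", "utm_campaign")
OPTIONAL = ("utm_content", "utm_term")


def build_tracked_url(base_url: str, **utm: str) -> str:
    """Append a validated, normalised UTM query string to base_url."""
    missing = [k for k in REQUIRED if not utm.get(k)]
    if missing:
        raise ValueError(f"missing UTM parameters: {missing}")
    unknown = [k for k in utm if k not in REQUIRED + OPTIONAL]
    if unknown:
        raise ValueError(f"unknown UTM parameters: {unknown}")
    # Normalise values so "Daily Dev" and "daily_dev" don't become two sources.
    clean = {
        k: utm[k].strip().lower().replace(" ", "_")
        for k in REQUIRED + OPTIONAL
        if utm.get(k)
    }
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))  # preserve any existing query parameters
    query.update(clean)
    return urlunsplit(parts._replace(query=urlencode(query)))
```

Rejecting unknown or missing parameters at link-creation time is the point of the design: a typo such as utm_sorce fails loudly here instead of silently fragmenting reports later.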

"The goal is not just to shorten URLs; it is to build an analytics stack that receives clean campaign data automatically, syncs it into your CRM, and powers dashboard reporting that is accurate enough for decisions." - Jordan Vale, Senior SEO Content Strategist

Once the UTM structure is in place, daily.dev click events can be sent as standardized webhooks for real-time updates or batch-synced into a data warehouse for long-term analysis. The key is ensuring the same user ID is passed from the frontend (e.g., ad clicks) to the backend (e.g., API usage or product activation). Without this connection, it’s impossible to link an ad click to a developer who later becomes an active user.

Additionally, retain raw UTM parameters (e.g., utm_source=daily_dev) in your data layer while creating simplified, human-readable labels for dashboards. This keeps your data auditable while making reports easier to interpret.

Comparing Stack Components Side by Side

Each tool in the stack serves a specific purpose. Here's how they contribute to a developer-focused measurement pipeline:

| Component | Role | Strengths | Limitations |
|---|---|---|---|
| Web Analytics (e.g., Plausible, GA4) | Tracks top-of-funnel traffic and content engagement | Easy setup; great for referral and page-level trends | Blocked by 30–70% of developer audiences |
| Product & API Analytics (e.g., PostHog, Moesif) | Monitors feature usage, code copy events, and Time to First Success | Links marketing touchpoints to product value | Requires backend SDK integration and manual event tagging |
| Advertising Data (e.g., daily.dev Ads) | Tracks top-of-funnel reach and discovery signals | Useful for measuring acquisition and channel performance | Can become a vanity metric without linking to downstream conversions |
| CRM | Maps events to known contacts and accounts | Essential for sales alignment and lifecycle tracking | Overemphasizes direct traffic without proper UTM tracking |
| Data Warehouse | Centralizes data for long-term analysis | Flexible; supports reconciliation across tools | High setup and maintenance costs |

With the average enterprise marketing team using 91 cloud services, the challenge isn’t finding tools - it’s ensuring they work together seamlessly. Start by focusing on data collection and identity resolution, as clean inputs are the foundation for reliable downstream insights.

Setting Up Attribution Models and Developer Funnels

Once your data is clean and ready to use, the next step is to untangle the often complex journey developers take when interacting with your product. For example, a developer might find your tool on GitHub, explore the documentation, experiment locally, and then sign up. Standard attribution models often fall short in capturing this intricate path.

Attribution Models for Developer Campaigns

When it comes to developer marketing, three attribution models stand out as the most effective, depending on the type of campaign you’re running.

U-shaped (position-based) attribution gives most of the credit to the first and last touchpoints, commonly 40% each, and spreads the remainder across everything in between. This makes it a good fit for campaigns focused on brand awareness, where both discovery and final conversion are critical. However, it underweights important mid-funnel steps like API testing, documentation reviews, or GitHub engagement - key moments in a developer’s decision-making process.

W-shaped attribution goes a step further by adding a credit point at the lead creation stage. This model works well for longer sales cycles that involve a formal handoff from marketing to sales. While it’s more detailed than the U-shaped model, implementing it can be tricky when dealing with fragmented datasets.

Time-decay attribution prioritizes recent interactions, giving more weight to touchpoints closer to the conversion. This approach aligns well with developer tools, where successful API integrations or smooth onboarding experiences often play a decisive role in the days leading up to sign-up.
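To make the weighting concrete, here is a minimal sketch of one common way to implement time decay: each touchpoint's weight halves for every fixed number of days it sits before the conversion. The exponential form and the 7-day half-life are assumptions chosen for illustration; the right decay rate depends on your sales cycle.

```python
def time_decay_credit(
    days_before_conversion: list[float], half_life_days: float = 7.0
) -> list[float]:
    """Split one conversion's credit across touchpoints.

    A touchpoint's raw weight halves every `half_life_days` before the
    conversion; weights are then normalised so the credits sum to 1.
    """
    if not days_before_conversion:
        return []
    raw = [0.5 ** (days / half_life_days) for days in days_before_conversion]
    total = sum(raw)
    return [weight / total for weight in raw]
```

For touchpoints 14, 7, and 0 days before signup, the raw weights are 0.25, 0.5, and 1, so the conversion-day touchpoint receives four times the credit of the one from two weeks earlier.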

For product-led growth strategies, a custom weighted model offers the most precision. A good starting point might look like this: allocate 20% credit to the first GitHub interaction, 30% to documentation engagement, 30% to local testing, and 20% to the final conversion. This breakdown reflects the steps where developers typically invest their time.
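The 20/30/30/20 split can be sketched in a few lines of Python. The stage keys, the channel names in the usage note, and the rule for handling stages with no recorded touchpoint (redistribute their weight proportionally) are assumptions for illustration.

```python
# Starting weights from the text above; tune them against your own data.
WEIGHTS = {"github": 0.20, "docs": 0.30, "local_testing": 0.30, "conversion": 0.20}


def weighted_credit(touchpoints: list[tuple[str, str]]) -> dict[str, float]:
    """Assign one conversion's credit to channels.

    touchpoints: (stage, channel) pairs for a single converted developer.
    Each stage's weight is shared equally among the channels seen at that
    stage; weight from stages with no touchpoints is redistributed
    proportionally across the stages that were observed.
    """
    by_stage: dict[str, list[str]] = {}
    for stage, channel in touchpoints:
        if stage in WEIGHTS:
            by_stage.setdefault(stage, []).append(channel)
    present = sum(WEIGHTS[stage] for stage in by_stage)
    credit: dict[str, float] = {}
    for stage, channels in by_stage.items():
        share = WEIGHTS[stage] / present / len(channels)
        for channel in channels:
            credit[channel] = credit.get(channel, 0.0) + share
    return credit
```

For example, a developer who found the repo through an ad, reached the docs and local testing via organic search, and converted on a direct visit would yield 20% for the ad, 60% for organic search, and 20% for direct.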

It’s important to remember that no attribution model is flawless. As Jakub Czakon, CMO at Markepear, explains:

"You treat it as a product and measure ROI at a program level. You optimize activities within the programs but don't report ROI on them."

This approach shifts the focus from perfecting individual touchpoints to evaluating the overall success of your programs. With attribution models providing clarity on the impact of different interactions, you can design funnels tailored to the technical behaviors of developers.

How to Build Developer-Specific Funnels

With refined attribution insights, you can structure your funnel stages to align with the technical actions that developers take on their journey. Unlike traditional funnels that track page views, a developer funnel focuses on technical behaviors and is divided into three main stages: discovery, activation, and adoption.

- Discovery stage: Key indicators include GitHub repo views, how far users scroll through documentation, and engagement with content from platforms like daily.dev Ads.

- Activation stage: Metrics such as API key creation, quickstart guide completion, and Time to First Success (TTFS) - measured against established benchmarks - are crucial here.

- Adoption stage: Signals like recurring API calls, CI/CD pipeline usage, and mentions within internal teams suggest that the tool has moved from evaluation to regular use.

For instance, Datadog uses a Product Qualified Lead (PQL) definition that requires users to send at least 100 monitoring events and stay active for over seven days. This approach has resulted in a 22% PQL-to-paid conversion rate. PQLs often outperform traditional Marketing Qualified Leads, with conversion rates 3–5 times higher for developer-focused products.
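A PQL rule of this shape is straightforward to express in code. The sketch below mirrors the two thresholds from the example above; the AccountUsage fields and the way "active for over seven days" is measured (span between first and last activity) are assumptions, not a description of how any vendor actually implements it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AccountUsage:
    """Hypothetical per-account usage summary pulled from product analytics."""
    account_id: str
    events_sent: int
    first_active: date
    last_active: date


def is_pql(usage: AccountUsage, min_events: int = 100, min_active_days: int = 7) -> bool:
    """True when the account meets both the volume and the activity-span thresholds."""
    active_days = (usage.last_active - usage.first_active).days
    return usage.events_sent >= min_events and active_days > min_active_days
```

Keeping the thresholds as parameters makes it easy to agree on them with sales and product and to revisit them as conversion data accumulates.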

Segmenting your funnel by developer personas - such as frontend versus backend, seniority, or tech stack - is equally important. A senior infrastructure engineer evaluating a deployment tool will interact differently than a junior developer exploring an API library. Combining these groups can distort your activation and conversion data.

Finally, complement your software-based attribution with a simple, open-ended question at signup: “How did you hear about us?” This helps capture insights from hard-to-measure channels like private Slack groups, Discord servers, and peer recommendations, which account for 52% of developer tool discovery. If you offer a multiple-choice version instead of free text, include a decoy option like “Billboards” to flag careless responses.

Attribution Model Trade-offs at a Glance

| Model | Best Use Case | Advantages | Limitations |
|---|---|---|---|
| U-Shaped | Brand-focused campaigns | Highlights discovery and final conversion | Overlooks mid-funnel interactions |
| W-Shaped | Complex sales cycles | Credits discovery, lead creation, and conversion | Difficult to implement with fragmented data |
| Time Decay | Short-term trials | Prioritizes recent, high-intent interactions | Undervalues early education and brand building |
| Custom Weighted | Product-led growth | Reflects developer behaviors like GitHub and documentation usage | Needs extensive data integration and refinement |

Building Dashboards That Drive Action

Dashboards are where your measurement stack turns into actionable insights. The key is designing dashboards that deliver the right information to the right people. A generic, one-size-fits-all approach won’t work - each audience needs a tailored view.

Dashboards for Leadership

Leadership dashboards should focus on lagging metrics - those that reflect outcomes and justify investments. These metrics show whether your developer programs are delivering results. Key metrics to include are:

- Qualified signups

- Pipeline value driven by developer campaigns

- Win rate

- Net Dollar Retention (NDR)

NDR, which often averages between 110–130% for successful developer tools, is a strong indicator of long-term business health. It’s a metric executives need to see clearly.

Attribution at the team level is also critical. For instance, a developer experimenting with your tool today might influence a major enterprise purchase months down the road. If your leadership dashboard only tracks individual conversions, it will likely underestimate the broader business impact of your developer marketing efforts.

Meanwhile, marketing teams need dashboards that focus on leading indicators, helping them fine-tune campaigns and engagement strategies before results are reflected in revenue.

Dashboards for Marketing Teams

Marketing dashboards should highlight leading metrics - early signs that provide a snapshot of how campaigns and onboarding flows are performing. Important metrics include:

- Documentation engagement depth

- API key creation rates

- Time to First Success (TTFS)

- Campaign performance data, like click-through rates from daily.dev Ads

Metrics like scroll depth and page progression in your documentation aren’t just vanity stats. Developers who engage deeply with your docs are 4x more likely to convert. Similarly, monitoring error rates during the "getting started" flow is essential. High friction at this stage can quietly derail activation rates long before it reflects in your signup numbers. Setting alerts for sudden drops in technical engagement ensures you can act quickly to address issues.
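A drop alert does not need to be elaborate. The sketch below compares the latest day's count (for example, API keys created) against the average of the preceding days and flags a fall beyond a set fraction. The 7-day window and 30% threshold are assumptions to adjust for your own traffic patterns.

```python
from statistics import mean


def engagement_dropped(
    daily_counts: list[int], window: int = 7, threshold: float = 0.3
) -> bool:
    """True when the latest day is more than `threshold` below the trailing average.

    daily_counts is ordered oldest to newest; the last entry is the day under test.
    """
    if len(daily_counts) < window + 1:
        return False  # not enough history to judge
    baseline = mean(daily_counts[-(window + 1):-1])
    if baseline == 0:
        return False  # nothing to drop from
    return daily_counts[-1] < baseline * (1 - threshold)
```

A trailing average is a simple baseline that ignores weekly seasonality; if weekend traffic is consistently lower, comparing against the same weekday in prior weeks would produce fewer false alarms.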

Dashboard Layout and Reporting Schedules

To create a cohesive reporting system, organize dashboards by funnel stage rather than by team or channel. This structure aligns with the developer journey and makes it easier to identify bottlenecks. Here’s a breakdown:

| Funnel Stage | Primary Audience | Key Metrics | Decision Support |
|---|---|---|---|
| Awareness (TOFU) | Marketing | GitHub repo views, docs navigation depth, daily.dev Ads impressions | Optimize content and channel spend |
| Activation (MOFU) | Marketing / Product | API key creation, TTFS, quickstart completion rate | Identify and reduce onboarding friction |
| Adoption (BOFU) | Leadership / Sales | Qualified signups, revenue pipeline, influenced revenue | Allocate budget and scale programs |

For reporting schedules, align the frequency with how each audience uses the data:

- Weekly: Marketing practitioners should review campaign-level performance and experiment results.

- Monthly: Marketing managers and CMOs can focus on program-level ROI and budget adjustments.

- Quarterly: Executive leadership and finance teams should evaluate long-term growth trends and refine strategic plans.

Finally, add a self-reported attribution field to capture qualitative insights. A simple "How did you hear about us?" question during signup can uncover discovery channels like private Slack groups, Discord servers, or peer recommendations. These sources account for 52% of developer tool discovery and often go unnoticed by software-based tracking. Regularly reviewing this qualitative data provides context that numbers alone can’t.

Maintaining Data Quality, Privacy, and Ongoing Improvement

To get the most out of your custom measurement stack, keeping data accurate and ensuring strong compliance measures are non-negotiable. As developer behaviors shift, your systems must adapt to stay relevant.

Data Governance and Standardization

The effectiveness of your measurement stack hinges on the quality of its data. Without proper structure, analytics can quickly spiral into chaos - misnamed events, lost properties, and eroded trust among teams.

A well-documented tracking plan can make all the difference. By maintaining consistent naming conventions and assigning clear ownership, you can reduce data errors by up to 90% and speed up analytics deployment by 2–3 times.

"Every analytics implementation starts with good intentions and ends in chaos without a tracking plan." - KISSmetrics Editorial

Consistency is key when it comes to naming conventions. The specific style you choose matters less than applying it uniformly across your tools:

| Naming Pattern | Example | Best For |
|---|---|---|
| Title Case | API Key Generated | Human-readable reports |
| snake_case | api_key_generated | Database/engineering teams |
| camelCase | apiKeyGenerated | JavaScript-native implementations |

In addition to naming conventions, assign a clear owner to each event. This way, when data quality issues arise, someone is accountable for fixing them. Conduct monthly audits to catch issues like naming inconsistencies, missing fields, or broken configurations before they affect your reports.
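Part of that monthly audit can be automated. As a minimal sketch, assuming the team has standardised on snake_case and keeps the tracking plan as a simple mapping of event name to owner, the check below lists events that break the convention or have nobody assigned. The data shape and function name are hypothetical.

```python
import re
from typing import Optional

# Lowercase words separated by single underscores, e.g. api_key_generated.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")


def audit_event_names(tracking_plan: dict[str, Optional[str]]) -> dict[str, list[str]]:
    """Report events with non-conforming names or no owner.

    tracking_plan maps event name -> owner (None or "" if unassigned).
    """
    return {
        "bad_names": sorted(
            name for name in tracking_plan if not SNAKE_CASE.match(name)
        ),
        "missing_owner": sorted(
            name for name, owner in tracking_plan.items() if not owner
        ),
    }
```

Running a check like this in CI against the tracking plan file catches a stray apiKeyGenerated before it reaches production, rather than a month later.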

Clear data protocols also lay the groundwork for strong privacy practices.

Privacy and Compliance in Developer Analytics

Developers are quick to adopt privacy measures. For instance, 30% of developers are invisible to client-side analytics due to ad blockers, and this number jumps to 70% when cookie consent is factored in. This presents both a measurement challenge and a compliance concern.

One effective solution is server-side tracking. By collecting events through authenticated server-side API calls instead of relying on browser pixels, you can bypass the limitations of cookies, which developers often block. Combine this with a first-party data strategy - use your own database UUIDs as persistent identifiers and link anonymous pre-signup activity to known accounts once authentication occurs.
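The linking step can be sketched as a simple lookup. In the example below, events recorded before signup carry only an anonymous ID; once a developer authenticates, a mapping from that anonymous ID to your own user UUID lets earlier events be attributed retroactively. The field names (anonymous_id, user_id) and the function are assumptions for illustration.

```python
from typing import Optional


def stitch_identities(events: list[dict], links: dict[str, str]) -> list[dict]:
    """Attach a user_id to events recorded under an anonymous_id.

    links maps anonymous_id -> first-party user UUID, captured at login or
    signup. Events that already have a user_id keep it; events whose
    anonymous_id never authenticated get user_id None.
    """
    stitched = []
    for event in events:
        user_id: Optional[str] = event.get("user_id") or links.get(
            event.get("anonymous_id", "")
        )
        stitched.append({**event, "user_id": user_id})
    return stitched
```

The function returns new dictionaries rather than mutating the input, so the raw event log stays intact and auditable while the stitched view feeds funnel reports.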

From a compliance perspective, avoid including personal identifiers in your analytics "hot path." Instead, use service, team, or repository IDs whenever possible. If individual-level data is necessary for debugging, isolate it in workflows that automatically expire and log access. For aggregated dashboards, enforce a minimum cohort size of five users to prevent re-identification. Transparency is also crucial - when developers understand how their data is used and see value in return, opt-in rates for consent-based attribution programs range from 65–80%, compared to 15–25% for general consumers.
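The minimum cohort rule can be enforced at the point where dashboard aggregates are produced. This sketch folds any segment with fewer than five users into an "other" bucket and only reports that bucket if it is itself large enough. The segment labels and function name are hypothetical, and the approach is a simple threshold, not a full disclosure-control method.

```python
from collections import Counter

MIN_COHORT_SIZE = 5  # threshold from the text above


def cohort_counts(
    user_segments: dict[str, str], min_size: int = MIN_COHORT_SIZE
) -> dict[str, int]:
    """Count users per segment, hiding segments smaller than min_size.

    user_segments maps user ID -> segment label. Small segments are pooled
    into "other", which is reported only if the pool reaches min_size.
    """
    counts = Counter(user_segments.values())
    safe: dict[str, int] = {}
    hidden = 0
    for segment, n in counts.items():
        if n >= min_size:
            safe[segment] = n
        else:
            hidden += n
    if hidden >= min_size:
        safe["other"] = hidden
    return safe
```

Note that when the pooled remainder is too small to show, the visible counts will not sum to the total user count; reporting the total alongside would let a reader infer the hidden number, so decide deliberately whether to publish it.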

With strong governance and privacy measures in place, your stack transforms into a tool for both accurate measurement and continuous improvement.

Using the Stack for Testing and Iteration

Once your data governance and privacy protocols are solid, your measurement stack can drive ongoing testing and iteration. It’s not just about monitoring; it’s about using data to refine and improve developer campaigns.

The process is straightforward: analyze the data, fine-tune specific activities, reallocate budgets to better-performing channels, and repeat. Use leading metrics like documentation engagement, CLI install rates, and Time to First Success (TTFS) to identify areas for improvement before they impact revenue. For budget decisions, focus on lagging metrics like qualified signups and pipeline value. Mixing these two types of metrics can lead to confusion about what you're optimizing.

When running A/B tests, approach budget adjustments as experiments. For example, shifting 10–15% of your spend from low-ROI channels to high-ROI ones allows you to test scalability without overhauling your entire program. For onboarding experiments, if developers aren’t achieving results within the expected benchmarks, prioritize improvements in that area. This could mean offering clearer code examples, simplifying quickstart guides, or reducing setup friction.
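The budget shift is simple arithmetic, sketched here under one interpretation: the 10–15% is read as a share of total spend, moved from the single lowest-ROI channel to the single highest-ROI one and capped at what the low-ROI channel actually has. The channel names and ROI figures in any usage are placeholders.

```python
def shift_budget(
    budgets: dict[str, float], roi: dict[str, float], fraction: float = 0.10
) -> dict[str, float]:
    """Move `fraction` of total spend from the lowest-ROI to the highest-ROI channel.

    The amount moved is capped at the lowest-ROI channel's current budget,
    and total spend is unchanged.
    """
    if not 0 < fraction < 1:
        raise ValueError("fraction must be between 0 and 1")
    worst = min(budgets, key=lambda channel: roi[channel])
    best = max(budgets, key=lambda channel: roi[channel])
    if worst == best:
        return dict(budgets)  # nothing to shift between
    moved = min(budgets[worst], sum(budgets.values()) * fraction)
    updated = dict(budgets)
    updated[worst] -= moved
    updated[best] += moved
    return updated
```

With 6,000 on a channel returning 0.5x and 4,000 on one returning 2x, a 10% shift moves 1,000 and leaves both at 5,000. Measuring the high-ROI channel again after the shift shows whether its returns hold at the larger budget.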

Conclusion

Measuring success in developer marketing is an ongoing effort. To do it right, align your metrics with every stage of the developer journey - starting from discovery and moving all the way to full production integration.

Rather than focusing on vanity metrics, prioritize indicators of genuine engagement. Look at metrics like the depth of documentation usage, API key creations, Time to First Success (TTFS), and CI/CD integration. As Mohammed Tahir, Developer Marketer, explains:

"The purpose of developer marketing metrics is to understand whether developers are progressing forward. If they are not, the work is to find where friction is happening and reduce it."

Building a layered measurement stack is key. Combining tools like product analytics, server-side tracking, community engagement platforms, and self-reported attribution gives you a much fuller picture than relying on a single tool.

FAQs

Which 5–10 developer actions should I track first?

To get a clear picture of how developers are engaging with your product, start with developer engagement metrics. Keep an eye on things like how often APIs are being called, activity levels on GitHub, and how frequently your documentation is being viewed. These numbers can reveal how developers are interacting with your tools.

Next, dive into product usage metrics. Look at data such as SDK implementation rates, how often key features are being activated, and the completion rates for onboarding processes. These figures help you gauge how well your product is being adopted.

Finally, don't stop at clicks when analyzing user behavior. Track time-on-ad interaction, including how long ads are visible and the amount of active engagement time. These metrics provide a deeper understanding of user interest and interaction.

How do I connect anonymous doc visits to later product usage?

To link anonymous documentation visits to product usage, focus on tracking developer-specific behaviors. These can include GitHub interactions, documentation engagement levels, and API usage trends. These signals help map out user journeys, showing how curiosity evolves into active product usage.

Leverage tools like UTM parameters and API call data to bridge the gap between initial visits and later product activity. This approach offers a clearer picture of how users engage with your product and its technical resources.

What’s the simplest way to measure Time to First Success (TTFS)?

The easiest way to measure Time to First Success (TTFS) is to time how long a developer takes to get from starting setup to a first working result.

Here’s how you can approach it:

- Decide what "First Success" means: This could be something like completing the first API call or finishing an onboarding step.

- Track the start and the milestone: Record a timestamp when setup begins (for example, a setup_started event) and another when the milestone is reached (a setup_success event).

- Measure the time gap: Subtract the start timestamp from the milestone timestamp to calculate the TTFS.

This straightforward process shows how quickly developers reach a working result once they begin setup.